[足式机器人]Part2 Dr. CAN学习笔记-Ch0-1矩阵的导数运算

本文仅供学习使用

本文参考:

B站:DR_CAN

Dr. CAN学习笔记-Ch0-1矩阵的导数运算

- 1. 标量向量方程对向量求导,分母布局,分子布局

- 1.1 标量方程对向量的导数

- 1.2 向量方程对向量的导数

- 2. 案例分析,线性回归

- 3. 矩阵求导的链式法则

1. 标量向量方程对向量求导,分母布局,分子布局

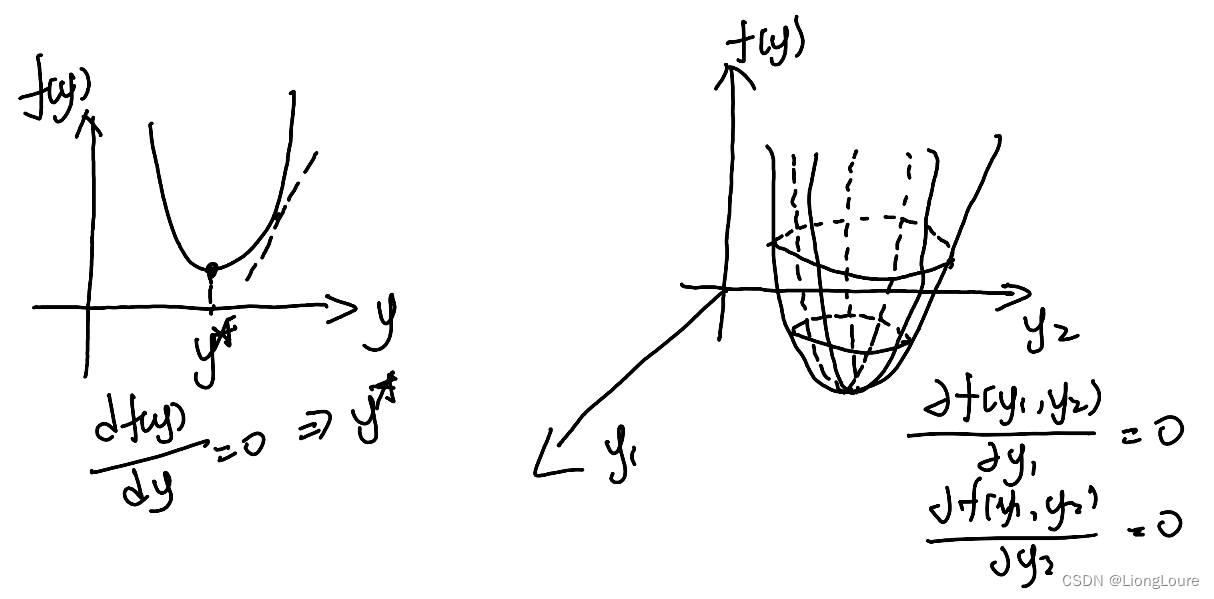

1.1 标量方程对向量的导数

-

y

y

y 为 一元向量 或 二元向量

-

y

y

y为多元向量

y ⃗ = [ y 1 , y 2 , ⋯ , y n ] ⇒ ∂ f ( y ⃗ ) ∂ y ⃗ \vec{y}=\left[ y_1,y_2,\cdots ,y_{\mathrm{n}} \right] \Rightarrow \frac{\partial f\left( \vec{y} \right)}{\partial \vec{y}} y=[y1,y2,⋯,yn]⇒∂y∂f(y)

其中: f ( y ⃗ ) f\left( \vec{y} \right) f(y) 为标量 1 × 1 1\times 1 1×1, y ⃗ \vec{y} y为向量 1 × n 1\times n 1×n

分母布局 Denominator Layout——行数与分母相同

∂ f ( y ⃗ ) ∂ y ⃗ = [ ∂ f ( y ⃗ ) ∂ y 1 ⋮ ∂ f ( y ⃗ ) ∂ y n ] n × 1 \frac{\partial f\left( \vec{y} \right)}{\partial \vec{y}}=\left[ \begin{array}{c} \frac{\partial f\left( \vec{y} \right)}{\partial y_1}\\ \vdots\\ \frac{\partial f\left( \vec{y} \right)}{\partial y_{\mathrm{n}}}\\ \end{array} \right] _{n\times 1} ∂y∂f(y)= ∂y1∂f(y)⋮∂yn∂f(y) n×1分子布局 Nunerator Layout——行数与分子相同

∂ f ( y ⃗ ) ∂ y ⃗ = [ ∂ f ( y ⃗ ) ∂ y 1 ⋯ ∂ f ( y ⃗ ) ∂ y n ] 1 × n \frac{\partial f\left( \vec{y} \right)}{\partial \vec{y}}=\left[ \begin{matrix} \frac{\partial f\left( \vec{y} \right)}{\partial y_1}& \cdots& \frac{\partial f\left( \vec{y} \right)}{\partial y_{\mathrm{n}}}\\ \end{matrix} \right] _{1\times n} ∂y∂f(y)=[∂y1∂f(y)⋯∂yn∂f(y)]1×n

1.2 向量方程对向量的导数

f

⃗

(

y

⃗

)

=

[

f

⃗

1

(

y

⃗

)

⋮

f

⃗

n

(

y

⃗

)

]

n

×

1

,

y

⃗

=

[

y

1

⋮

y

m

]

m

×

1

\vec{f}\left( \vec{y} \right) =\left[ \begin{array}{c} \vec{f}_1\left( \vec{y} \right)\\ \vdots\\ \vec{f}_{\mathrm{n}}\left( \vec{y} \right)\\ \end{array} \right] _{n\times 1},\vec{y}=\left[ \begin{array}{c} y_1\\ \vdots\\ y_{\mathrm{m}}\\ \end{array} \right] _{\mathrm{m}\times 1}

f(y)=

f1(y)⋮fn(y)

n×1,y=

y1⋮ym

m×1

∂

f

⃗

(

y

⃗

)

n

×

1

∂

y

⃗

m

×

1

=

[

∂

f

⃗

(

y

⃗

)

∂

y

1

⋮

∂

f

⃗

(

y

⃗

)

∂

y

m

]

m

×

1

=

[

∂

f

1

(

y

⃗

)

∂

y

1

⋯

∂

f

n

(

y

⃗

)

∂

y

1

⋮

⋱

⋮

∂

f

1

(

y

⃗

)

∂

y

m

⋯

∂

f

n

(

y

⃗

)

∂

y

m

]

m

×

n

\frac{\partial \vec{f}\left( \vec{y} \right) _{n\times 1}}{\partial \vec{y}_{\mathrm{m}\times 1}}=\left[ \begin{array}{c} \frac{\partial \vec{f}\left( \vec{y} \right)}{\partial y_1}\\ \vdots\\ \frac{\partial \vec{f}\left( \vec{y} \right)}{\partial y_{\mathrm{m}}}\\ \end{array} \right] _{\mathrm{m}\times 1}=\left[ \begin{matrix} \frac{\partial f_1\left( \vec{y} \right)}{\partial y_1}& \cdots& \frac{\partial f_{\mathrm{n}}\left( \vec{y} \right)}{\partial y_1}\\ \vdots& \ddots& \vdots\\ \frac{\partial f_1\left( \vec{y} \right)}{\partial y_{\mathrm{m}}}& \cdots& \frac{\partial f_{\mathrm{n}}\left( \vec{y} \right)}{\partial y_{\mathrm{m}}}\\ \end{matrix} \right] _{\mathrm{m}\times \mathrm{n}}

∂ym×1∂f(y)n×1=

∂y1∂f(y)⋮∂ym∂f(y)

m×1=

∂y1∂f1(y)⋮∂ym∂f1(y)⋯⋱⋯∂y1∂fn(y)⋮∂ym∂fn(y)

m×n, 为分母布局

若: y ⃗ = [ y 1 ⋮ y m ] m × 1 , A = [ a 11 ⋯ a 1 n ⋮ ⋱ ⋮ a m 1 ⋯ a m n ] \vec{y}=\left[ \begin{array}{c} y_1\\ \vdots\\ y_{\mathrm{m}}\\ \end{array} \right] _{\mathrm{m}\times 1}, A=\left[ \begin{matrix} a_{11}& \cdots& a_{1\mathrm{n}}\\ \vdots& \ddots& \vdots\\ a_{\mathrm{m}1}& \cdots& a_{\mathrm{mn}}\\ \end{matrix} \right] y= y1⋮ym m×1,A= a11⋮am1⋯⋱⋯a1n⋮amn , 则有:

- ∂ A y ⃗ ∂ y ⃗ = A T \frac{\partial A\vec{y}}{\partial \vec{y}}=A^{\mathrm{T}} ∂y∂Ay=AT(分母布局)

- ∂ y ⃗ T A y ⃗ ∂ y ⃗ = A y ⃗ + A T y ⃗ \frac{\partial \vec{y}^{\mathrm{T}}A\vec{y}}{\partial \vec{y}}=A\vec{y}+A^{\mathrm{T}}\vec{y} ∂y∂yTAy=Ay+ATy, 当 A = A T A=A^{\mathrm{T}} A=AT时, ∂ y ⃗ T A y ⃗ ∂ y ⃗ = 2 A y ⃗ \frac{\partial \vec{y}^{\mathrm{T}}A\vec{y}}{\partial \vec{y}}=2A\vec{y} ∂y∂yTAy=2Ay

若为分子布局,则有: ∂ A y ⃗ ∂ y ⃗ = A \frac{\partial A\vec{y}}{\partial \vec{y}}=A ∂y∂Ay=A

2. 案例分析,线性回归

- ∂ A y ⃗ ∂ y ⃗ = A T \frac{\partial A\vec{y}}{\partial \vec{y}}=A^{\mathrm{T}} ∂y∂Ay=AT(分母布局)

- ∂ y ⃗ T A y ⃗ ∂ y ⃗ = A y ⃗ + A T y ⃗ \frac{\partial \vec{y}^{\mathrm{T}}A\vec{y}}{\partial \vec{y}}=A\vec{y}+A^{\mathrm{T}}\vec{y} ∂y∂yTAy=Ay+ATy, 当 A = A T A=A^{\mathrm{T}} A=AT时, ∂ y ⃗ T A y ⃗ ∂ y ⃗ = 2 A y ⃗ \frac{\partial \vec{y}^{\mathrm{T}}A\vec{y}}{\partial \vec{y}}=2A\vec{y} ∂y∂yTAy=2Ay

Linear Regression 线性回归

z

^

=

y

1

+

y

2

x

⇒

J

=

∑

i

=

1

n

[

z

i

−

(

y

1

+

y

2

x

i

)

]

2

\hat{z}=y_1+y_2x\Rightarrow J=\sum_{i=1}^n{\left[ z_i-\left( y_1+y_2x_i \right) \right] ^2}

z^=y1+y2x⇒J=i=1∑n[zi−(y1+y2xi)]2

找到

y

1

,

y

2

y_1,y_2

y1,y2 使得

J

J

J最小

z

⃗

=

[

z

1

⋮

z

n

]

,

[

x

⃗

]

=

[

1

x

1

⋮

⋮

1

x

n

]

,

y

⃗

=

[

y

1

y

2

]

⇒

z

⃗

^

=

[

x

⃗

]

y

⃗

=

[

y

1

+

y

2

x

1

⋮

y

1

+

y

2

x

n

]

\vec{z}=\left[ \begin{array}{c} z_1\\ \vdots\\ z_{\mathrm{n}}\\ \end{array} \right] ,\left[ \vec{x} \right] =\left[ \begin{array}{l} 1& x_1\\ \vdots& \vdots\\ 1& x_{\mathrm{n}}\\ \end{array} \right] ,\vec{y}=\left[ \begin{array}{c} y_1\\ y_2\\ \end{array} \right] \Rightarrow \hat{\vec{z}}=\left[ \vec{x} \right] \vec{y}=\left[ \begin{array}{c} y_1+y_2x_1\\ \vdots\\ y_1+y_2x_{\mathrm{n}}\\ \end{array} \right]

z=

z1⋮zn

,[x]=

1⋮1x1⋮xn

,y=[y1y2]⇒z^=[x]y=

y1+y2x1⋮y1+y2xn

J

=

[

z

⃗

−

z

⃗

^

]

T

[

z

⃗

−

z

⃗

^

]

=

[

z

⃗

−

[

x

⃗

]

y

⃗

]

T

[

z

⃗

−

[

x

⃗

]

y

⃗

]

=

z

⃗

z

⃗

T

−

z

⃗

T

[

x

⃗

]

y

⃗

−

y

⃗

T

[

x

⃗

]

T

z

⃗

+

y

⃗

T

[

x

⃗

]

T

[

x

⃗

]

y

⃗

J=\left[ \vec{z}-\hat{\vec{z}} \right] ^{\mathrm{T}}\left[ \vec{z}-\hat{\vec{z}} \right] =\left[ \vec{z}-\left[ \vec{x} \right] \vec{y} \right] ^{\mathrm{T}}\left[ \vec{z}-\left[ \vec{x} \right] \vec{y} \right] =\vec{z}\vec{z}^{\mathrm{T}}-\vec{z}^{\mathrm{T}}\left[ \vec{x} \right] \vec{y}-\vec{y}^{\mathrm{T}}\left[ \vec{x} \right] ^{\mathrm{T}}\vec{z}+\vec{y}^{\mathrm{T}}\left[ \vec{x} \right] ^{\mathrm{T}}\left[ \vec{x} \right] \vec{y}

J=[z−z^]T[z−z^]=[z−[x]y]T[z−[x]y]=zzT−zT[x]y−yT[x]Tz+yT[x]T[x]y

其中:

(

z

⃗

T

[

x

⃗

]

y

⃗

)

T

=

y

⃗

T

[

x

⃗

]

T

z

⃗

\left( \vec{z}^{\mathrm{T}}\left[ \vec{x} \right] \vec{y} \right) ^{\mathrm{T}}=\vec{y}^{\mathrm{T}}\left[ \vec{x} \right] ^{\mathrm{T}}\vec{z}

(zT[x]y)T=yT[x]Tz, 则有:

J

=

z

⃗

z

⃗

T

−

2

z

⃗

T

[

x

⃗

]

y

⃗

+

y

⃗

T

[

x

⃗

]

T

[

x

⃗

]

y

⃗

J=\vec{z}\vec{z}^{\mathrm{T}}-2\vec{z}^{\mathrm{T}}\left[ \vec{x} \right] \vec{y}+\vec{y}^{\mathrm{T}}\left[ \vec{x} \right] ^{\mathrm{T}}\left[ \vec{x} \right] \vec{y}

J=zzT−2zT[x]y+yT[x]T[x]y

进而:

∂

J

∂

y

⃗

=

0

−

2

(

z

⃗

T

[

x

⃗

]

)

T

+

2

[

x

⃗

]

T

[

x

⃗

]

y

⃗

=

∇

y

⃗

⟹

∂

J

∂

y

⃗

∗

=

0

,

y

⃗

∗

=

(

[

x

⃗

]

T

[

x

⃗

]

)

−

1

[

x

⃗

]

T

z

⃗

\frac{\partial J}{\partial \vec{y}}=0-2\left( \vec{z}^{\mathrm{T}}\left[ \vec{x} \right] \right) ^{\mathrm{T}}+2\left[ \vec{x} \right] ^{\mathrm{T}}\left[ \vec{x} \right] \vec{y}=\nabla \vec{y}\Longrightarrow \frac{\partial J}{\partial \vec{y}^*}=0,\vec{y}^*=\left( \left[ \vec{x} \right] ^{\mathrm{T}}\left[ \vec{x} \right] \right) ^{-1}\left[ \vec{x} \right] ^{\mathrm{T}}\vec{z}

∂y∂J=0−2(zT[x])T+2[x]T[x]y=∇y⟹∂y∗∂J=0,y∗=([x]T[x])−1[x]Tz

其中:

(

[

x

⃗

]

T

[

x

⃗

]

)

−

1

\left( \left[ \vec{x} \right] ^{\mathrm{T}}\left[ \vec{x} \right] \right) ^{-1}

([x]T[x])−1不一定有解,则

y

⃗

∗

\vec{y}^*

y∗无法得到解析解——定义初始

y

⃗

∗

\vec{y}^*

y∗,

y

⃗

∗

=

y

⃗

∗

−

α

∇

,

α

=

[

α

1

0

0

α

2

]

\vec{y}^*=\vec{y}^*-\alpha \nabla ,\alpha =\left[ \begin{matrix} \alpha _1& 0\\ 0& \alpha _2\\ \end{matrix} \right]

y∗=y∗−α∇,α=[α100α2]

其中:

α

\alpha

α称为学习率,对

x

x

x而言则需进行归一化

3. 矩阵求导的链式法则

标量函数: J = f ( y ( u ) ) , ∂ J ∂ u = ∂ J ∂ y ∂ y ∂ u J=f\left( y\left( u \right) \right) ,\frac{\partial J}{\partial u}=\frac{\partial J}{\partial y}\frac{\partial y}{\partial u} J=f(y(u)),∂u∂J=∂y∂J∂u∂y

标量对向量求导: J = f ( y ⃗ ( u ⃗ ) ) , y ⃗ = [ y 1 ( u ⃗ ) ⋮ y m ( u ⃗ ) ] m × 1 , u ⃗ = [ u ⃗ 1 ⋮ u ⃗ n ] n × 1 J=f\left( \vec{y}\left( \vec{u} \right) \right) ,\vec{y}=\left[ \begin{array}{c} y_1\left( \vec{u} \right)\\ \vdots\\ y_{\mathrm{m}}\left( \vec{u} \right)\\ \end{array} \right] _{m\times 1},\vec{u}=\left[ \begin{array}{c} \vec{u}_1\\ \vdots\\ \vec{u}_{\mathrm{n}}\\ \end{array} \right] _{\mathrm{n}\times 1} J=f(y(u)),y= y1(u)⋮ym(u) m×1,u= u1⋮un n×1

分析: ∂ J 1 × 1 ∂ u n × 1 n × 1 = ∂ J ∂ y m × 1 m × 1 ∂ y m × 1 ∂ u n × 1 n × m \frac{\partial J_{1\times 1}}{\partial u_{\mathrm{n}\times 1}}_{\mathrm{n}\times 1}=\frac{\partial J}{\partial y_{m\times 1}}_{m\times 1}\frac{\partial y_{m\times 1}}{\partial u_{\mathrm{n}\times 1}}_{\mathrm{n}\times \mathrm{m}} ∂un×1∂J1×1n×1=∂ym×1∂Jm×1∂un×1∂ym×1n×m 无法相乘

y ⃗ = [ y 1 ( u ⃗ ) y 2 ( u ⃗ ) ] 2 × 1 , u ⃗ = [ u ⃗ 1 u ⃗ 2 u ⃗ 3 ] 3 × 1 \vec{y}=\left[ \begin{array}{c} y_1\left( \vec{u} \right)\\ y_2\left( \vec{u} \right)\\ \end{array} \right] _{2\times 1},\vec{u}=\left[ \begin{array}{c} \vec{u}_1\\ \vec{u}_2\\ \vec{u}_3\\ \end{array} \right] _{3\times 1} y=[y1(u)y2(u)]2×1,u= u1u2u3 3×1

J = f ( y ⃗ ( u ⃗ ) ) , ∂ J ∂ u ⃗ = [ ∂ J ∂ u ⃗ 1 ∂ J ∂ u ⃗ 2 ∂ J ∂ u ⃗ 3 ] 3 × 1 ⟹ ∂ J ∂ u ⃗ 1 = ∂ J ∂ y 1 ∂ y 1 ( u ⃗ ) ∂ u ⃗ 1 + ∂ J ∂ y 2 ∂ y 2 ( u ⃗ ) ∂ u ⃗ 1 ∂ J ∂ u ⃗ 2 = ∂ J ∂ y 1 ∂ y 1 ( u ⃗ ) ∂ u ⃗ 2 + ∂ J ∂ y 2 ∂ y 2 ( u ⃗ ) ∂ u ⃗ 2 ∂ J ∂ u ⃗ 3 = ∂ J ∂ y 1 ∂ y 1 ( u ⃗ ) ∂ u ⃗ 3 + ∂ J ∂ y 2 ∂ y 2 ( u ⃗ ) ∂ u ⃗ 3 ⟹ ∂ J ∂ u ⃗ = [ ∂ y 1 ( u ⃗ ) ∂ u ⃗ 1 ∂ y 2 ( u ⃗ ) ∂ u ⃗ 1 ∂ y 1 ( u ⃗ ) ∂ u ⃗ 2 ∂ y 2 ( u ⃗ ) ∂ u ⃗ 2 ∂ y 1 ( u ⃗ ) ∂ u ⃗ 3 ∂ y 2 ( u ⃗ ) ∂ u ⃗ 3 ] 3 × 2 [ ∂ J ∂ y 1 ∂ J ∂ y 2 ] 2 × 2 = ∂ y ⃗ ( u ⃗ ) ∂ u ⃗ ∂ J ∂ y ⃗ J=f\left( \vec{y}\left( \vec{u} \right) \right) ,\frac{\partial J}{\partial \vec{u}}=\left[ \begin{array}{c} \frac{\partial J}{\partial \vec{u}_1}\\ \frac{\partial J}{\partial \vec{u}_2}\\ \frac{\partial J}{\partial \vec{u}_3}\\ \end{array} \right] _{3\times 1}\Longrightarrow \begin{array}{c} \frac{\partial J}{\partial \vec{u}_1}=\frac{\partial J}{\partial y_1}\frac{\partial y_1\left( \vec{u} \right)}{\partial \vec{u}_1}+\frac{\partial J}{\partial y_2}\frac{\partial y_2\left( \vec{u} \right)}{\partial \vec{u}_1}\\ \frac{\partial J}{\partial \vec{u}_2}=\frac{\partial J}{\partial y_1}\frac{\partial y_1\left( \vec{u} \right)}{\partial \vec{u}_2}+\frac{\partial J}{\partial y_2}\frac{\partial y_2\left( \vec{u} \right)}{\partial \vec{u}_2}\\ \frac{\partial J}{\partial \vec{u}_3}=\frac{\partial J}{\partial y_1}\frac{\partial y_1\left( \vec{u} \right)}{\partial \vec{u}_3}+\frac{\partial J}{\partial y_2}\frac{\partial y_2\left( \vec{u} \right)}{\partial \vec{u}_3}\\ \end{array} \\ \Longrightarrow \frac{\partial J}{\partial \vec{u}}=\left[ \begin{array}{l} \frac{\partial y_1\left( \vec{u} \right)}{\partial \vec{u}_1}& \frac{\partial y_2\left( \vec{u} \right)}{\partial \vec{u}_1}\\ \frac{\partial y_1\left( \vec{u} \right)}{\partial \vec{u}_2}& \frac{\partial y_2\left( \vec{u} \right)}{\partial \vec{u}_2}\\ \frac{\partial y_1\left( \vec{u} \right)}{\partial \vec{u}_3}& \frac{\partial y_2\left( \vec{u} \right)}{\partial \vec{u}_3}\\ \end{array} \right] _{3\times 2}\left[ \begin{array}{c} \frac{\partial J}{\partial y_1}\\ \frac{\partial J}{\partial y_2}\\ \end{array} \right] _{2\times 2}=\frac{\partial \vec{y}\left( \vec{u} \right)}{\partial \vec{u}}\frac{\partial J}{\partial \vec{y}} J=f(y(u)),∂u∂J= ∂u1∂J∂u2∂J∂u3∂J 3×1⟹∂u1∂J=∂y1∂J∂u1∂y1(u)+∂y2∂J∂u1∂y2(u)∂u2∂J=∂y1∂J∂u2∂y1(u)+∂y2∂J∂u2∂y2(u)∂u3∂J=∂y1∂J∂u3∂y1(u)+∂y2∂J∂u3∂y2(u)⟹∂u∂J= ∂u1∂y1(u)∂u2∂y1(u)∂u3∂y1(u)∂u1∂y2(u)∂u2∂y2(u)∂u3∂y2(u) 3×2[∂y1∂J∂y2∂J]2×2=∂u∂y(u)∂y∂J

∂ J ∂ u ⃗ = ∂ y ⃗ ( u ⃗ ) ∂ u ⃗ ∂ J ∂ y ⃗ \frac{\partial J}{\partial \vec{u}}=\frac{\partial \vec{y}\left( \vec{u} \right)}{\partial \vec{u}}\frac{\partial J}{\partial \vec{y}} ∂u∂J=∂u∂y(u)∂y∂J

eg:

x

⃗

[

k

+

1

]

=

A

x

⃗

[

k

]

+

B

u

⃗

[

k

]

,

J

=

x

⃗

T

[

k

+

1

]

x

⃗

[

k

+

1

]

\vec{x}\left[ k+1 \right] =A\vec{x}\left[ k \right] +B\vec{u}\left[ k \right] ,J=\vec{x}^{\mathrm{T}}\left[ k+1 \right] \vec{x}\left[ k+1 \right]

x[k+1]=Ax[k]+Bu[k],J=xT[k+1]x[k+1]

∂

J

∂

u

⃗

=

∂

x

⃗

[

k

+

1

]

∂

u

⃗

∂

J

∂

x

⃗

[

k

+

1

]

=

B

T

⋅

2

x

⃗

[

k

+

1

]

=

2

B

T

x

⃗

[

k

+

1

]

\frac{\partial J}{\partial \vec{u}}=\frac{\partial \vec{x}\left[ k+1 \right]}{\partial \vec{u}}\frac{\partial J}{\partial \vec{x}\left[ k+1 \right]}=B^{\mathrm{T}}\cdot 2\vec{x}\left[ k+1 \right] =2B^{\mathrm{T}}\vec{x}\left[ k+1 \right]

∂u∂J=∂u∂x[k+1]∂x[k+1]∂J=BT⋅2x[k+1]=2BTx[k+1]