Deploying llama.cpp (Windows)

1. Download the source code and model

# Download the source code

git clone https://github.com/ggerganov/llama.cpp.git

# Download the llama-7b model

git clone https://www.modelscope.cn/skyline2006/llama-7b.git

Check the CMake version:

D:\pyworkspace\llama_cpp\llama.cpp\build>cmake --version

cmake version 3.22.0-rc2

CMake suite maintained and supported by Kitware (kitware.com/cmake).

2. Start the build
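Before configuring, the version reported by `cmake --version` can also be checked from a script. The following is a minimal Python sketch, assuming `cmake` is on the PATH. The function names `parse_cmake_version` and `installed_cmake_version` are hypothetical helpers introduced here for illustration, and any minimum version you compare against is your own assumption, not a value taken from this article.

```python
import re
import subprocess


def parse_cmake_version(output):
    """Extract (major, minor, patch) from the text printed by `cmake --version`."""
    match = re.search(r"cmake version (\d+)\.(\d+)\.(\d+)", output)
    if match is None:
        raise ValueError("no CMake version found in output")
    # A pre-release suffix such as "-rc2" is ignored on purpose.
    return tuple(int(part) for part in match.groups())


def installed_cmake_version():
    """Run `cmake --version` (assumes cmake is on PATH) and parse its output."""
    result = subprocess.run(
        ["cmake", "--version"], capture_output=True, text=True, check=True
    )
    return parse_cmake_version(result.stdout)
```

For the output shown above, `parse_cmake_version("cmake version 3.22.0-rc2")` gives `(3, 22, 0)`. Because the result is a tuple, it can be compared directly against a minimum you choose, for example `installed_cmake_version() >= (3, 14, 0)`.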

# Run from inside the llama.cpp directory

mkdir build

cd build

cmake ..

Configure output:

D:\pyworkspace\llama_cpp\llama.cpp\build>cmake ..

-- Building for: Visual Studio 16 2019

-- Selecting Windows SDK version 10.0.18362.0 to target Windows 10.0.22631.

-- The C compiler identification is MSVC 19.29.30137.0

-- The CXX compiler identification is MSVC 19.29.30137.0

-- Detecting C compiler ABI info

-- Detecting C compiler ABI info - done

-- Check for working C compiler: D:/Program Files (x86)/Microsoft Visual Studio/2019/Community/VC/Tools/MSVC/14.29.30133/bin/Hostx64/x64/cl.exe - skipped

-- Detecting C compile features

-- Detecting C compile features - done

-- Detecting CXX compiler ABI info

-- Detecting CXX compiler ABI info - done

-- Check for working CXX compiler: D:/Program Files (x86)/Microsoft Visual Studio/2019/Community/VC/Tools/MSVC/14.29.30133/bin/Hostx64/x64/cl.exe - skipped

-- Detecting CXX compile features

-- Detecting CXX compile features - done

-- Found Git: D:/Git/Git/cmd/git.exe (found version "2.29.2.windows.2")

-- Looking for pthread.h

-- Looking for pthread.h - not found

-- Found Threads: TRUE

-- CMAKE_SYSTEM_PROCESSOR: AMD64

-- CMAKE_GENERATOR_PLATFORM:

-- x86 detected

-- Performing Test HAS_AVX_1

-- Performing Test HAS_AVX_1 - Success

-- Performing Test HAS_AVX2_1

-- Performing Test HAS_AVX2_1 - Success

-- Performing Test HAS_FMA_1

-- Performing Test HAS_FMA_1 - Success

-- Performing Test HAS_AVX512_1

-- Performing Test HAS_AVX512_1 - Failed

-- Performing Test HAS_AVX512_2

-- Performing Test HAS_AVX512_2 - Failed

-- Configuring done

-- Generating done

-- Build files have been written to: D:/pyworkspace/llama_cpp/llama.cpp/build

Building with the Release configuration produced errors on my machine, so I switched to Debug. This step uses Visual Studio to perform the build.

cmake --build . --config Debug

Build output:

D:\pyworkspace\llama_cpp\llama.cpp\build>cmake --build . --config Debug

Microsoft (R) Build Engine version 16.11.2+f32259642 for .NET Framework

Copyright (C) Microsoft Corporation. All rights reserved.

Checking Build System

Generating build details from Git

-- Found Git: D:/Git/Git/cmd/git.exe (found version "2.29.2.windows.2")

Building Custom Rule D:/pyworkspace/llama_cpp/llama.cpp/common/CMakeLists.txt

build-info.cpp

build_info.vcxproj -> D:\pyworkspace\llama_cpp\llama.cpp\build\common\build_info.dir\Debug\build_info.lib

Building Custom Rule D:/pyworkspace/llama_cpp/llama.cpp/CMakeLists.txt

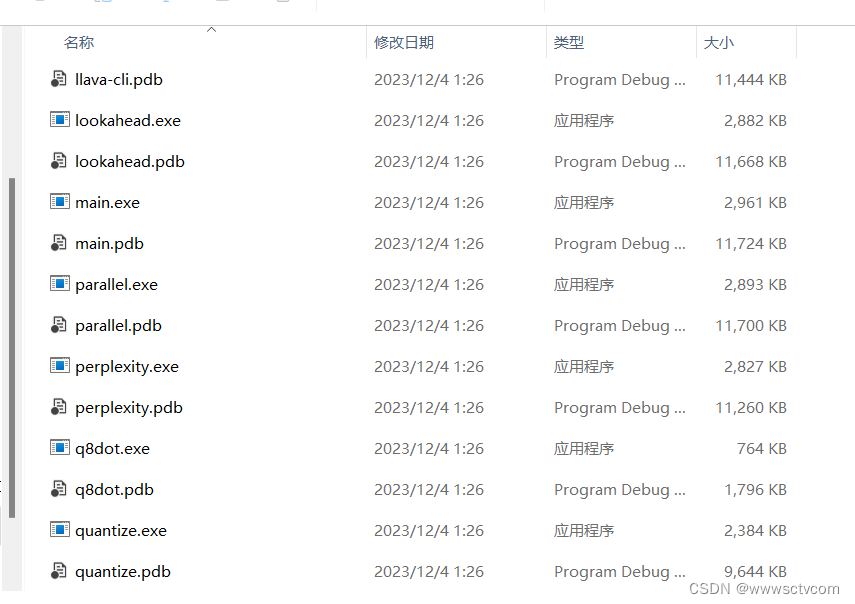

ggml.c

On my machine, the build produced quantize.exe, main.exe, and other executables under D:\pyworkspace\llama_cpp\llama.cpp\build\bin\Debug.
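To confirm that the build produced the tools needed later, the output directory can be checked with a short script. This is a minimal Python sketch. `missing_executables` is a hypothetical helper name, and the default file list covers only the two executables named above.

```python
from pathlib import Path


def missing_executables(bin_dir, names=("quantize.exe", "main.exe")):
    """Return the entries of `names` that are not present as files in bin_dir."""
    bin_path = Path(bin_dir)
    return [name for name in names if not (bin_path / name).is_file()]
```

Calling `missing_executables(r"D:\pyworkspace\llama_cpp\llama.cpp\build\bin\Debug")` returns an empty list when both files exist. A non-empty list names the files the build did not produce, which is a hint to re-check the build log.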

3. Quantization and inference
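As orientation for this section, the arithmetic below sketches why a q4_0 file is much smaller than the f16 file it is derived from. It assumes the q4_0 layout of 32 weights per block, stored as one 16-bit scale plus 32 four-bit values, and uses a rounded parameter count of 6.7e9 for llama-7b. Both the rounded count and the helper name `estimate_bytes` are assumptions made for illustration. Real files typically differ somewhat because some tensors are kept at other precisions and the file also carries metadata.

```python
# Rough size arithmetic for f16 versus q4_0 storage (a sketch, not a measurement).

F16_BYTES_PER_WEIGHT = 2.0

Q4_0_BLOCK_WEIGHTS = 32       # weights grouped into one q4_0 block
Q4_0_BLOCK_BYTES = 2 + 16     # one fp16 scale + 32 four-bit values
Q4_0_BYTES_PER_WEIGHT = Q4_0_BLOCK_BYTES / Q4_0_BLOCK_WEIGHTS  # 4.5 bits per weight

APPROX_LLAMA_7B_WEIGHTS = 6.7e9  # assumption: rounded parameter count


def estimate_bytes(n_weights, bytes_per_weight):
    """Estimate the storage needed for n_weights at the given density."""
    return n_weights * bytes_per_weight
```

Under these assumptions, `estimate_bytes(APPROX_LLAMA_7B_WEIGHTS, F16_BYTES_PER_WEIGHT)` is about 13.4e9 bytes and `estimate_bytes(APPROX_LLAMA_7B_WEIGHTS, Q4_0_BYTES_PER_WEIGHT)` is about 3.8e9 bytes, roughly a 3.6x reduction.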

Install the required Python dependencies:

python -m pip install -r requirements.txt

Place the downloaded llama-7b model in the models directory and run the following command. It produces a ggml-model-f16.gguf file in the llama-7b directory:

python convert.py models/llama-7b/

Quantize the resulting file:

D:\pyworkspace\llama_cpp\llama.cpp\build\bin\Debug\quantize.exe ./models/llama-7b/ggml-model-f16.gguf ./models/llama-7b/ggml-model-q4_0.gguf q4_0

Run inference:

D:\pyworkspace\llama_cpp\llama.cpp\build\bin\Debug\main.exe -m ./models/llama-7b/ggml-model-q4_0.gguf -n 256 --repeat_penalty 1.0 --color -i -r "User:" -f prompts/chat-with-bob.txt
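The three steps in this section (convert, quantize, run) can be collected into one script so they are easy to repeat. The sketch below only reuses the commands and flags shown above. `build_pipeline` and `run_pipeline` are hypothetical helper names, and it assumes the commands are run from the llama.cpp directory with `python` on the PATH.

```python
import subprocess
from pathlib import Path


def build_pipeline(model_dir, bin_dir, quant_type="q4_0"):
    """Return the convert, quantize, and inference commands as argument lists."""
    model_dir = Path(model_dir)
    bin_dir = Path(bin_dir)
    f16_path = model_dir / "ggml-model-f16.gguf"
    quant_path = model_dir / f"ggml-model-{quant_type}.gguf"
    return [
        # Step 1: convert the downloaded model to an f16 GGUF file.
        ["python", "convert.py", str(model_dir)],
        # Step 2: quantize the f16 file.
        [str(bin_dir / "quantize.exe"), str(f16_path), str(quant_path), quant_type],
        # Step 3: start an interactive session with the quantized model.
        [str(bin_dir / "main.exe"), "-m", str(quant_path), "-n", "256",
         "--repeat_penalty", "1.0", "--color", "-i", "-r", "User:",
         "-f", "prompts/chat-with-bob.txt"],
    ]


def run_pipeline(commands, cwd):
    """Run each command in order from cwd, stopping at the first failure."""
    for command in commands:
        subprocess.run(command, cwd=cwd, check=True)
```

A possible call is `run_pipeline(build_pipeline("models/llama-7b", r"D:\pyworkspace\llama_cpp\llama.cpp\build\bin\Debug"), cwd=r"D:\pyworkspace\llama_cpp\llama.cpp")`. Keeping command construction separate from execution makes it possible to print and inspect the commands before anything runs.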