一个完整的手工构建的cuda动态链接库工程 03记

1, 源代码

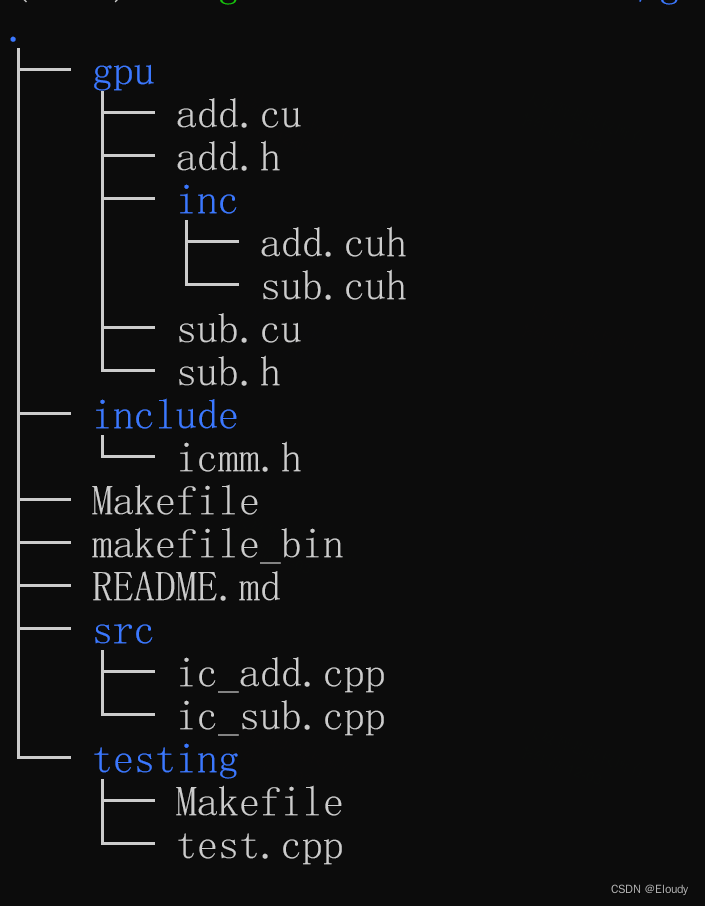

仅仅是加入了模板函数和对应的 .cuh文件,当前的目录结构如下:

icmm/gpu/add.cu

#include <stdio.h>

#include <cuda_runtime.h>

#include "inc/add.cuh"

// different name in this level for different typename, as extern "C" can not decorate template function that is in C++;

extern "C" void vector_add_gpu_s(float *A, float *B, float *C, int n)

{

dim3 grid, block;

block.x = 256;

grid.x = (n + block.x - 1) / block.x;

printf("CUDA kernel launch with %d blocks of %d threads\n", grid.x, block.x);

vector_add_kernel<><<<grid, block>>>(A, B, C, n);

}

extern "C" void vector_add_gpu_d(double* A, double* B, double* C, int n)

{

dim3 grid, block;

block.x = 256;

grid.x = (n + block.x - 1) / block.x;

printf("CUDA kernel launch with %d blocks of %d threads\n", grid.x, block.x);

vector_add_kernel<><<<grid, block>>>(A, B, C, n);

}

icmm/gpu/add.h

#pragma once

extern "C" void vector_add_gpu_s(float *A, float *B, float *C, int n);

extern "C" void vector_add_gpu_d(double* A, double* B, double* C, int n);

icmm/gpu/inc/add.cuh

#pragma once

template<typename T>

__global__ void vector_add_kernel(T *A, T *B, T *C, int n)

{

int i = blockDim.x * blockIdx.x + threadIdx.x;

if (i < n)

{

C[i] = A[i] + B[i] + 0.0f;

}

}

icmm/gpu/inc/sub.cuh

#pragma once

template<typename T>

__global__ void vector_sub_kernel(T *A, T *B, T *C, int n)

{

int i = blockDim.x * blockIdx.x + threadIdx.x;

if (i < n)

{

C[i] = A[i] - B[i] + 0.0f;

}

}

icmm/gpu/sub.cu

#include <stdio.h>

#include <cuda_runtime.h>

#include "inc/sub.cuh"

extern "C" void vector_sub_gpu_s(float *A, float *B, float *C, int n)

{

dim3 grid, block;

block.x = 256;

grid.x = (n + block.x - 1) / block.x;

printf("CUDA kernel launch with %d blocks of %d threads\n", grid.x, block.x);

vector_sub_kernel<><<<grid, block>>>(A, B, C, n);

}

extern "C" void vector_sub_gpu_d(double *A, double *B, double *C, int n)

{

dim3 grid, block;

block.x = 256;

grid.x = (n + block.x - 1) / block.x;

printf("CUDA kernel launch with %d blocks of %d threads\n", grid.x, block.x);

vector_sub_kernel<><<<grid, block>>>(A, B, C, n);

}

icmm/gpu/sub.h

#pragma once

extern "C" void vector_sub_gpu_s(float *A, float *B, float *C, int n);

extern "C" void vector_sub_gpu_d(double *A, double *B, double *C, int n);

icmm/include/icmm.h

#pragma once

#include<cuda_runtime.h>

void hello_print();

void ic_S_add(float* A, float* B, float *C, int n);

void ic_D_add(double* A, double* B, double* C, int n);

void ic_S_sub(float* A, float* B, float *C, int n);

void ic_D_sub(float* A, float* B, float *C, int n);

icmm/Makefile

#libicmm.so

TARGETS = libicmm.so

GPU_ARCH= -arch=sm_70

all: $(TARGETS)

sub.o: gpu/sub.cu

nvcc -Xcompiler -fPIC $(GPU_ARCH) -c $<

add.o: gpu/add.cu

nvcc -Xcompiler -fPIC $(GPU_ARCH) -c $<

#-dc

#-rdc=true

add_link.o: add.o

nvcc -Xcompiler -fPIC $(GPU_ARCH) -dlink -o $@ $< -L/usr/local/cuda/lib64 -lcudart -lcudadevrt

ic_add.o: src/ic_add.cpp

g++ -fPIC -c $< -L/usr/local/cuda/lib64 -I/usr/local/cuda/include -lcudart -lcudadevrt -I./

ic_sub.o: src/ic_sub.cpp

g++ -fPIC -c $< -L/usr/local/cuda/lib64 -I/usr/local/cuda/include -lcudart -lcudadevrt -I./

$(TARGETS): sub.o ic_sub.o add.o ic_add.o add_link.o

mkdir -p lib

g++ -shared -fPIC $^ -o lib/libicmm.so -I/usr/local/cuda/include -L/usr/local/cuda/lib64 -lcudart -lcudadevrt

-rm -f *.o

.PHONY:clean

clean:

-rm -f *.o lib/*.so test ./bin/test

-rm -rf lib bin

icmm/makefile_bin

# executable

TARGET = test

GPU_ARCH = -arch=sm_70

all: $(TARGET)

add.o: gpu/add.cu

nvcc -dc -rdc=true $(GPU_ARCH) -c $<

sub.o: gpu/sub.cu

nvcc -dc -rdc=true $(GPU_ARCH) -c $<

add_link.o: add.o

nvcc $(GPU_ARCH) -dlink -o $@ $< -L/usr/local/cuda/lib64 -lcudart -lcudadevrt

sub_link.o: sub.o

nvcc $(GPU_ARCH) -dlink -o $@ $< -L/usr/local/cuda/lib64 -lcudart -lcudadevrt

ic_add.o: src/ic_add.cpp

g++ -c $< -L/usr/local/cuda/lib64 -I/usr/local/cuda/include -lcudart -lcudadevrt -I./

ic_sub.o: src/ic_sub.cpp

g++ -c $< -L/usr/local/cuda/lib64 -I/usr/local/cuda/include -lcudart -lcudadevrt -I./

test.o: testing/test.cpp

g++ -c $< -I/usr/local/cuda/include -L/usr/local/cuda/lib64 -lcudart -lcudadevrt -I./include

test: sub.o ic_sub.o sub_link.o add.o ic_add.o test.o add_link.o

g++ $^ -L/usr/local/cuda/lib64 -lcudart -lcudadevrt -o test

mkdir ./bin

cp ./test ./bin/

-rm -f *.o

.PHONY:clean

clean:

-rm -f *.o bin/* $(TARGET)

icmm/src/ic_add.cpp

#include <stdio.h>

#include <cuda_runtime.h>

#include "gpu/add.h"

//extern void vector_add_gpu(float *A, float *B, float *C, int n);

void hello_print()

{

printf("hello world!\n");

}

//void ic_add(float* A, float* B, float *C, int n){ vector_add_gpu(A, B, C, n);}

void ic_S_add(float* A, float* B, float *C, int n)

{

vector_add_gpu_s(A, B, C, n);

}

void ic_D_add(double* A, double* B, double* C, int n)

{

vector_add_gpu_d(A, B, C, n);

}

icmm/src/ic_sub.cpp

#include <stdio.h>

#include <cuda_runtime.h>

#include "gpu/sub.h"

//extern void vector_add_gpu(float *A, float *B, float *C, int n);

void ic_S_sub(float* A, float* B, float *C, int n)

{

vector_sub_gpu_s(A, B, C, n);

}

void ic_D_sub(double* A, double* B, double *C, int n)

{

vector_sub_gpu_d(A, B, C, n);

}

icmm/testing/Makefile

#test

TARGET = test

all: $(TARGET)

CXX_FLAGS = -I/usr/local/cuda/include -L/usr/local/cuda/lib64 -lcudart -lcudadevrt -I../include -L../

test.o: test.cpp

g++ -c $< $(CXX_FLAGS)

$(TARGET):test.o

g++ $< -o $@ -L/usr/local/cuda/lib64 -lcudart -lcudadevrt -L../lib -licmm

@echo "to execute: export LD_LIBRARY_PATH=${PWD}/../lib"

.PHONY:clean

clean:

-rm -f *.o $(TARGET)

icmm/testing/test.cpp

#include <cuda_runtime.h>

#include <stdio.h>

#include <string.h>

#include <stdlib.h>

#include "icmm.h"

void add_test_s(float* A, float* B, float* C, int n)

{

ic_S_add(A, B, C, n);

printf("Copy output data from the CUDA device to the host memory\n");

float* h_C = (float*)malloc(n*sizeof(float));

cudaMemcpy(h_C, C, n*sizeof(float), cudaMemcpyDeviceToHost);

for (int i = 0; i < n; ++i)

{

printf("%3.2f ", h_C[i]);

// if (fabs(h_A[i] + h_B[i] - h_C[i]) > 1e-5) { fprintf(stderr, "Result verification failed at element %d!\n", i); exit(EXIT_FAILURE); }

}

printf("\nTest PASSED\n");

free(h_C);

}

/**/

void add_test_d(double* A, double* B, double* C, int n)

{

ic_D_add(A, B, C, n);

printf("Copy output data from the CUDA device to the host memory\n");

float *h_C = (float *)malloc(n*sizeof(double));

cudaMemcpy(h_C, C, sizeof(double), cudaMemcpyDeviceToHost);

for (int i = 0; i < n; ++i)

{

printf("%3.2f ", h_C[i]);

// if (fabs(h_A[i] + h_B[i] - h_C[i]) > 1e-5) { fprintf(stderr, "Result verification failed at element %d!\n", i); exit(EXIT_FAILURE); }

}

printf("\nTest PASSED\n");

free(h_C);

}

/**/

void sub_test_s(float* A, float* B, float* C, int n)

{

ic_S_sub(A, B, C, n);

printf("Copy output data from the CUDA device to the host memory\n");

float* h_C = (float*)malloc(n*sizeof(float));

cudaMemcpy(h_C, C, n*sizeof(float), cudaMemcpyDeviceToHost);

for (int i = 0; i < n; ++i)

{

printf("%3.2f ", h_C[i]);

// if (fabs(h_A[i] + h_B[i] - h_C[i]) > 1e-5) { fprintf(stderr, "Result verification failed at element %d!\n", i); exit(EXIT_FAILURE); }

}

printf("\nTest PASSED\n");

free(h_C);

}

int main(void)

{

int n = 50;

size_t size = n * sizeof(float);

float *h_A = (float *)malloc(size);

float *h_B = (float *)malloc(size);

float *h_C = (float *)malloc(size);

for (int i = 0; i < n; ++i)

{

h_A[i] = rand() / (float)RAND_MAX;

h_B[i] = rand() / (float)RAND_MAX;

}

float *d_A = NULL;

float *d_B = NULL;

float *d_C = NULL;

cudaMalloc((void **)&d_A, size);

cudaMalloc((void **)&d_B, size);

cudaMalloc((void **)&d_C, size);

cudaMemcpy(d_A, h_A, size, cudaMemcpyHostToDevice);

cudaMemcpy(d_B, h_B, size, cudaMemcpyHostToDevice);

/*

int threadsPerBlock = 256;

int blocksPerGrid = (n + threadsPerBlock - 1) / threadsPerBlock;

printf("CUDA kernel launch with %d blocks of %d threads\n", blocksPerGrid, threadsPerBlock);

vector_add_kernel<<<blocksPerGrid, threadsPerBlock>>>(d_A, d_B, d_C, n);

*/

//ic_add(d_A, d_B, d_C, n);

add_test_s(d_A, d_B, d_C, n);

sub_test_s(d_A, d_B, d_C, n);

cudaFree(d_A);

cudaFree(d_B);

cudaFree(d_C);

free(h_A);

free(h_B);

free(h_C);

printf("Done\n");

return 0;

}

2. 总结

.cu 代码给 g++ 的 .cpp 的代码需要使用 extern "C" 来修饰,所以一template 函数的实例化不能一直贯彻到 .cu 源代码的最顶层;